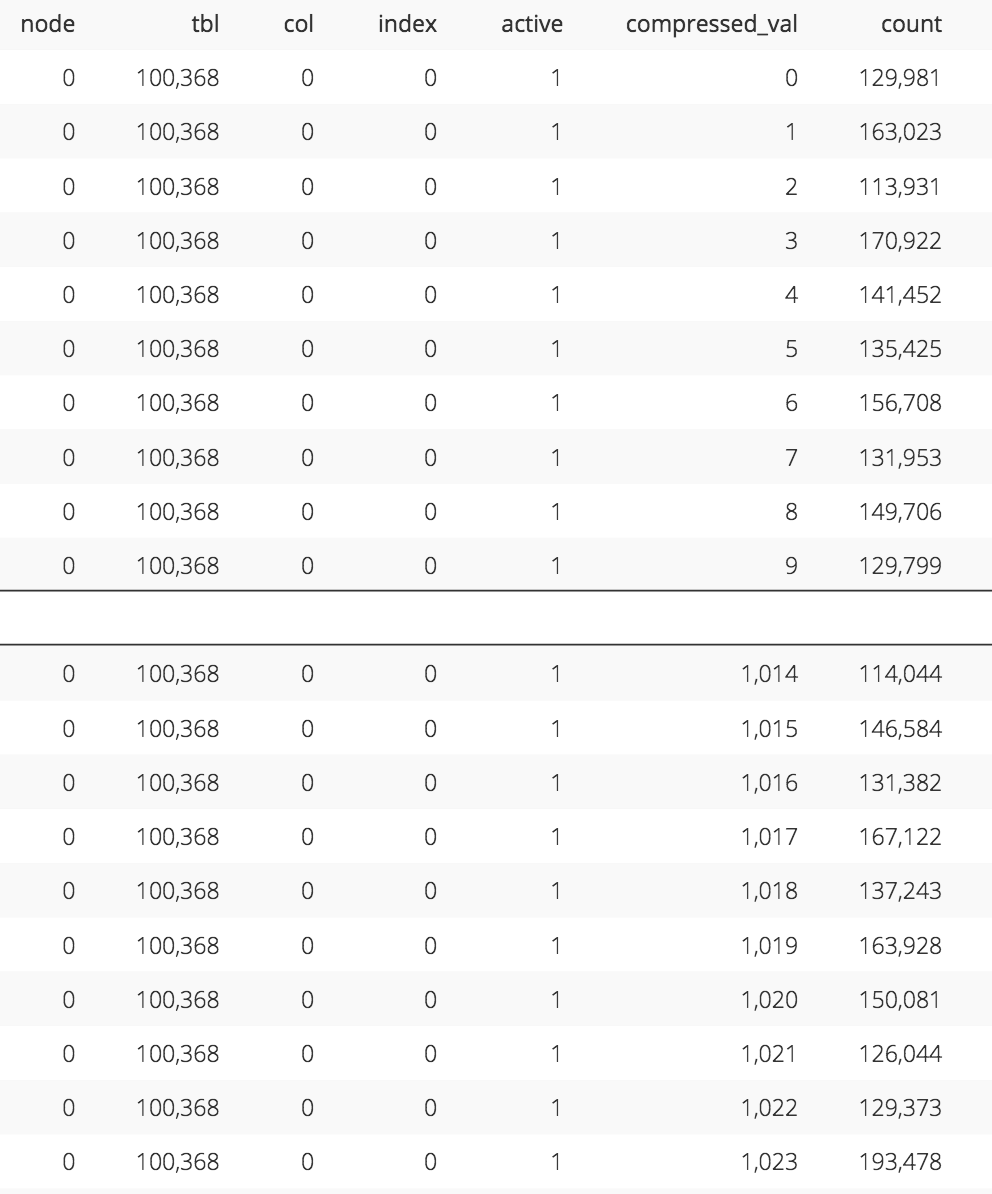

Amazon Redshift is (for the most part) a Data Warehouse as a service: there’s no need to provision hardware or install databases or patches, and there are few options to tune the system. While there are few options available to tune or customize the database, it’s absolutely critical to correctly design the physical table layout to maximize performance.

System Architecture

Before diving into the detail, it’s worth giving an overview of how Redshift is internally architected. The diagram below illustrates how every query is submitted to the Leader Node, which is responsible for parsing the query, determining the best execution plan, and coordinating and aggregating results.

When data is loaded, it’s distributed across each compute node in the cluster as a series of slices, where each slice corresponds to a CPU core, memory allocation, and disk space. This method maximizes parallel execution and supports scalability, as the system can be migrated to a larger cluster with additional nodes. When a query is executed, the Leader Node breaks the task into a number of parallel steps, which are executed by the Compute Nodes that actually store the data and perform the heavy lifting. This means any given query can be executed in parallel across multiple cores reading multiple disks, thus maximizing throughput. Of course, the extent to which the query slices can run independently in parallel depends upon how well the workload can be balanced, and the remainder of this article explains how this can be achieved using Sort Keys and Distribution Keys.

Sort Keys and Indexes

Ever since Bayer and McCreight first proposed the B-Tree index in 1972, it has been the primary indexing method used by almost every database, although database designers must carefully balance the trade-off between better read performance and write throughput. While a B-Tree supports rapid access for both direct lookup and scan operations, it’s a major cause of locking contention when bulk loading data, which can lead to performance issues. Even the Bitmap Index, specifically designed for analytic query performance, leads to significant concurrency issues when maintained by multiple writers, and is often disabled prior to bulk load operations.

On Redshift, there’s no need to devise an indexing strategy or drop and rebuild indexes around batch ETL loads, as Redshift does not support traditional indexes. Instead, the data is physically stored to maximize query performance using SORT KEYS.

The diagram above illustrates the method used by Redshift, which is based on sorting data during load to maximize read performance, in this case by TEAM and then CITY.

As data is loaded, it’s sorted by the SORT KEY, and the minimum and maximum values are recorded for each 1 MB block. The optimizer uses these values to skip over blocks that cannot match the query’s WHERE clause.
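To make the block-skipping idea behind sort keys concrete, here is a minimal Python sketch. It is an illustration of the general technique only, not Redshift's actual implementation: the names `Block`, `load_sorted`, and `scan_team` are hypothetical, rows are plain `(team, city)` tuples, and a "block" holds two rows rather than 1 MB of data.

```python
from dataclasses import dataclass


@dataclass
class Block:
    rows: list       # (team, city) tuples, stored in sort-key order
    min_key: tuple   # smallest (team, city) value in this block
    max_key: tuple   # largest (team, city) value in this block


def load_sorted(rows, block_size):
    """Sort rows by (team, city) and split them into fixed-size blocks,
    recording the minimum and maximum sort-key value of each block."""
    ordered = sorted(rows)
    blocks = []
    for start in range(0, len(ordered), block_size):
        chunk = ordered[start:start + block_size]
        blocks.append(Block(chunk, chunk[0], chunk[-1]))
    return blocks


def scan_team(blocks, team):
    """Return the rows for one team plus the number of blocks read.
    A block is skipped when the team falls outside its min/max range."""
    matches, blocks_read = [], 0
    for block in blocks:
        if team < block.min_key[0] or team > block.max_key[0]:
            continue  # the recorded range proves no row here can match
        blocks_read += 1
        matches.extend(row for row in block.rows if row[0] == team)
    return matches, blocks_read


# Made-up sample data, purely for illustration.
sample_rows = [
    ("Sharks", "Leeds"), ("Eagles", "York"), ("Lions", "Bath"),
    ("Eagles", "Hull"), ("Sharks", "Derby"), ("Lions", "Ely"),
    ("Tigers", "Kent"), ("Eagles", "Bath"),
]
sample_blocks = load_sorted(sample_rows, block_size=2)
found, read = scan_team(sample_blocks, "Lions")
print(f"read {read} of {len(sample_blocks)} blocks, found {found}")
```

Because the rows are sorted before being split into blocks, rows for the same team end up in neighbouring blocks, so a filter on the leading sort column only has to read the few blocks whose range covers that value. On unsorted data the ranges would overlap heavily and far fewer blocks could be skipped.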
